Digital Fashion Interoperability

by Jendrik Poloczek, August 16, 2022

Permissionlessness and composability are crucial requirements for protocol primitives to become relevant. The range of possible applications of an asset defines its overall utility value. By analogy, digital fashion collections, metaverses, and protocols that enable interoperability will become more relevant. In our previous post, we highlighted the relevance of digital fashion. In this post, we build on that and start discussing the importance of digital fashion interoperability. Starting from implementation-agnostic questions that are crucial to highlight going forward, we then explain Decentraland’s Linked Wearables approach as a case study of the latest advancements in production.

This is part 2 of our Digital Fashion mini-series. Read part 1 about “Fashion in the Metaverse” here.

Why Digital Fashion?

Fashion is more than functional clothing. Fashion is an extension and expression of our identity, or better: of all the different facets of our identity. With it, we participate in cultural conversation in private and public spaces, in the physical and digital realm. For the fashion industry, digital fashion has been an evolving research topic, mostly covering its application in the rapid prototyping space. But only with the recent emergence of blockchain technology, which enabled non-fungible tokens (NFTs), has it become possible to truly have scarcity, ownership, and therefore utility value in the digital realm. For an in-depth introduction to digital fashion and our investment thesis, we refer to the previous article.

Different crypto-native metaverses

When we look at the crypto ecosystem’s big picture, we can see that different design choices have been made and that different ecosystems are more or less suitable for different use cases. The same goes for metaverses: the two most popular and active metaverses that are NFT-native are Decentraland and Sandbox. The two are very different in their environments. Decentraland’s entry point is an open source web-based application that successively loads low-poly 3D scenes from a decentralized persistence layer to construct the limited space around the avatar. Avatars can move around and interact with experiences deployed on land property. Sandbox, in comparison, is a metaverse that feels more like a game. The chosen graphic style follows a voxel approach that many will recognize from the video game Minecraft. The entry point is a desktop application based on the Unity game engine that communicates with centralized servers. Its go-to-market is shaped by its ability to iterate aggressively on metaverse mechanics. New and upcoming metaverses feature more realistic environments by employing, for example, the Unreal Engine. This list of resources gives an overview of virtual spaces in the making.

Why is interoperability important

When we now shift the focus back to digital fashion, it’s clear that we’ll see it in lots of different metaverses and augmented reality environments, and that owners would like to be able to use their digital couture in these different contexts. The utility value of a wearable spans from self-expression to signaling to gatekeeper functionality. It’s therefore in the interest of creators and owners to increase the utility surface for a given collection. In addition, interoperability with a wide range of environments reduces the dependency on one or a few environments and thereby increases the long-term permanence of fashion pieces.

How is interoperability achieved

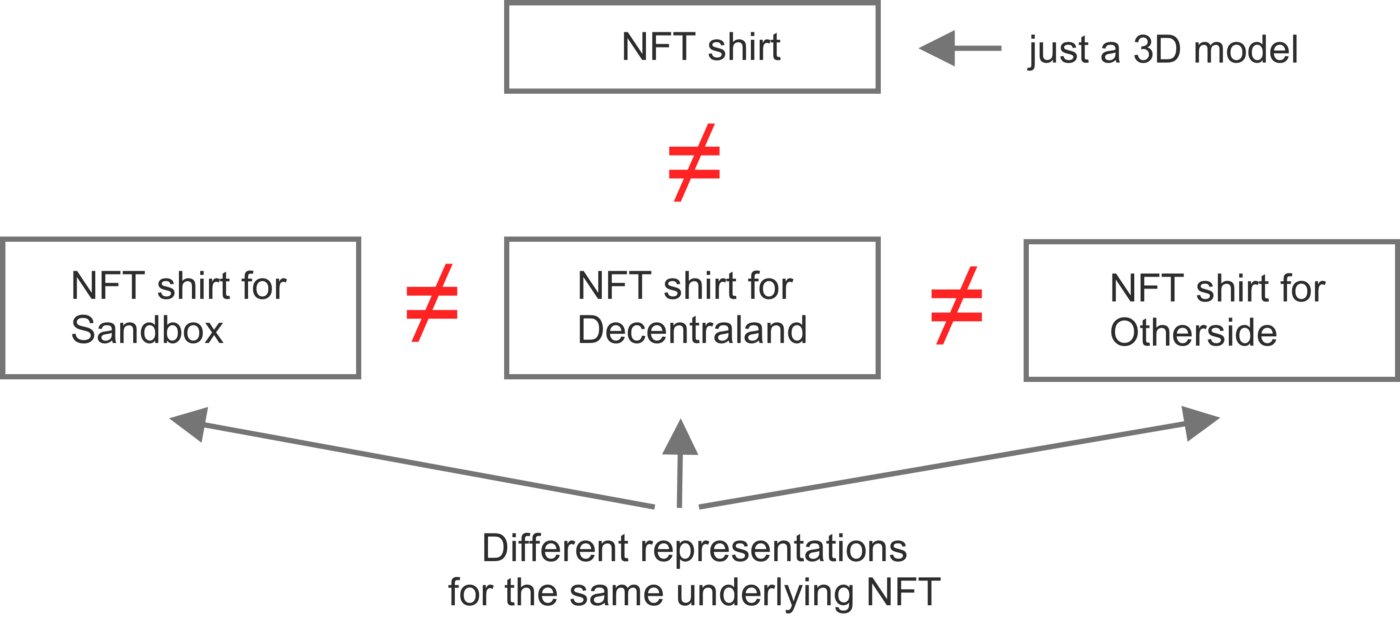

To discuss interoperability of wearables between metaverses, we need to differentiate between semantic and syntactic interoperability. For this, Wikipedia gives a widely applicable definition that is useful as a framework for the discussion: semantic interoperability is “the ability of computer systems to exchange data with unambiguous, shared meaning”, whereas syntactic interoperability “refers to the packaging and transmission mechanisms for data”. The latter is a prerequisite for the former.

Semantic interoperability

In the context of metaverse wearables, semantic interoperability refers to sharing the same meaning of these wearables in two or more different environments. For example, the meaning could include the visual expression of a piece, its item attributes in the context of certain games, or gatekeeper functionality. This depends highly on the environment or context in which the wearable will be embedded.

When comparing the graphic styles of Decentraland and Sandbox, one can easily argue that the visual expression of, for example, a virtual dress is limited by the graphical constraints of each metaverse. Therefore, a visual transformation of the wearable between different environments (e.g. from low-poly to voxel-based) that does not lose its meaning is needed. This could be done by metaverses, by fashion creators, or by a third-party intermediary protocol, Gravity Layer being one of them. We strongly believe that this problem will be addressed by future companies to allow (semi-)automatic transformation as a new application of AI models.

Syntactic interoperability

Syntactic interoperability simply refers to the packaging (format) and transmission mechanisms for the data that is then interpreted (given meaning). The two categories of data here are content/metadata and ownership. The content is mostly stored in 3D graphics formats that have been in use in the fashion industry, the visualization industry, and video games. Each format has its own characteristics and capabilities. A common practice is to store the original content in a feature-rich format and to reduce it to a simpler format if needed. The metadata can follow the same principle. Both content and metadata should be readable and interpretable, which means the receiving environment has to understand the format.

Let us shift the view to wearable ownership. To make sure that a specific wearable is owned by somebody, we need to be able to verify its ownership. This is usually done by the user providing a signature from their wallet account to the entry point application. This assumes that the wearable exists as an NFT on the same chain as the user account. However, other mechanisms to check validity are possible too, as we will see below in Decentraland’s Linked Wearables.

Generally speaking, interoperability can be achieved by different stakeholders: creators, metaverses themselves (represented by DAOs), or third-party middleware companies that work on behalf of the creators or the metaverses. The market power dynamics influence where the drive to interoperability will stem from. Currently, we see creators working on semantic interoperability by re-fitting a wearable to different contexts or graphic styles, and metaverses working on syntactic interoperability by making it easier for creators to use their already existing NFT collections, as we will discuss below in the Decentraland’s Linked Wearables section.

We have discussed interoperability from very abstract and conceptual fundamentals. With this, we can now look at a concrete metaverse and apply the framework to understand design choices and take a snapshot of the current progress. To allow anybody with basic crypto knowledge to follow the discussion we will start with the basics of wearables in Decentraland.

Decentraland’s wearables

Decentraland wearables are concrete instances of items. Analogous to NFTs that have multiple editions, an item can have multiple editions, e.g. minting 500 shirts of a specific T-shirt item. These items can be bundled in collections for better organization. The underlying NFTs for each item exist on the Polygon sidechain. Here creators and members can mint, buy, sell or transfer items without paying much gas.

Each wearable can fall into the following categories that modify a certain part of the avatar: Body shape (shape of the entire character), Hat, Helmet, Hair, Facial hair, Head, Upper body (e.g. jacket or shirt), Lower body (e.g. pants or shorts), Feet, Skin. Also, accessories are possible that modify different parts: Mask, Eye Wear, Earring, Tiara (crown or something that sits on the head), and Top-head (something that is applied on the head, e.g. a halo).

The team behind Decentraland released 3D model templates for Blender, a popular modeling and animation tool, to fit wearables around different sections of the human avatar body. Wearables must adhere to the content policy and to technical restrictions such as triangle count. After creation, Decentraland’s wearable editor can be used to preview, submit and manage wearables. In the submission process, the Curation Committee decides if the submitted collection will be accepted.

The next two sections will dive deeper into the technical aspects of achieving interoperability within Decentraland. Feel free to jump to the conclusion at the end.

Decentraland’s interoperability

Looking at the previous process of publishing wearables with an interoperability lens, we can easily identify where semantic and syntactic interoperability challenges might arise.

First, syntactic interoperability: the NFTs (the ownership logic of a certain wearable) on Polygon/Matic are specifically created for Decentraland. This limits the scope of interoperability for this concrete NFT only to Decentraland. Furthermore, this special Decentraland NFT has to exist on Polygon/Matic, therefore not allowing NFTs existing on other chains to have a wearable representation in Decentraland. The format used for 3D models and their animations is glTF (GL Transmission Format). The format has been chosen since it’s efficient and highly interoperable with modern web technologies. For Decentraland, animation has to be embedded into the glTF file. Furthermore, textures are either embedded or referenced.
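To make the embedded-versus-referenced distinction concrete, here is a minimal sketch that inspects the JSON part of a glTF 2.0 file. It relies only on the glTF 2.0 convention that an image entry either carries a `uri` (a `data:` URI or a path to an external file) or points at a `bufferView`. The function name `summarize_gltf` and the sample document are our own illustration and not part of any Decentraland tooling.

```python
import json


def summarize_gltf(gltf: dict) -> dict:
    """Count animations and classify each image as embedded or referenced."""
    embedded, referenced = [], []
    for index, image in enumerate(gltf.get("images", [])):
        uri = image.get("uri")
        # No uri means the image lives in a bufferView (embedded binary data);
        # a data: URI is embedded base64; anything else points to another file.
        if uri is None or uri.startswith("data:"):
            embedded.append(index)
        else:
            referenced.append(index)
    return {
        "animations": len(gltf.get("animations", [])),
        "embedded_images": embedded,
        "referenced_images": referenced,
    }


# Made-up sample document, trimmed to the fields this sketch looks at.
sample = json.loads("""
{
  "asset": {"version": "2.0"},
  "animations": [{"name": "idle", "channels": [], "samplers": []}],
  "images": [
    {"uri": "data:image/png;base64,iVBORw0KGgo="},
    {"uri": "textures/fabric.png"},
    {"bufferView": 0, "mimeType": "image/png"}
  ]
}
""")

summary = summarize_gltf(sample)
print(summary)
```

A check like this could run before submission to spot a wearable whose animation is missing from the file or whose textures point to files that would need to ship alongside it.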

In terms of semantic interoperability, Decentraland supports only a limited number of categories of wearables making two human body shapes the norm. A wearable on Otherside, worn by an ape, would therefore need to be re-fitted/translated to a Decentraland body shape. In the most extreme cases, wearables designed for one metaverse might not be able to be re-fitted to another, e.g. a fish metaverse to a human-avatar-based metaverse. The refitting is further obstructed by content policies and technical limitations of the respective metaverses. As an example of the former, one metaverse might care about copyright, but the other one doesn’t. As an example of the latter, one metaverse might only allow low-poly models whereas another metaverse allows high-resolution models. Bridging semantic gaps is not an easy task and involves context-dependent decision-making from the collection creators.

Decentraland’s Linked Wearables

At the end of March 2022, a proposal with the final definitions for Linked Wearables, previously known as Third Party Wearables, was enacted. Linked Wearables are not regular wearables as discussed in the previous section. Linked Wearables are 3D representations of external NFTs by third parties outside Decentraland that can be used as wearables in-world. Therefore, they don’t have a rarity and cannot be sold in primary and secondary markets. This raises two problems: how to map external NFTs to Decentraland 3D assets, and how to authenticate owners. The former is solved by an on-chain registry that the committee governs, and the latter is solved by externalizing the task of authenticating NFT ownership to the collection creators.

For mapping, the “Third Party Registry” (TPR) is deployed on Polygon, as are regular wearables. The purpose of this registry is to provide an on-chain way to check whether an item has been approved or rejected by a committee member, and to look up its metadata and the third-party resolver (see its roles). The third-party resolver is a URL that is used for authenticating NFT ownership via the collection creators. To identify Linked Wearables, each item has a special id called the URN, which includes the third-party name, the collection id (the third-party NFT contract address is recommended), and the item id (the token id of the third-party NFT contract is recommended).
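As a rough illustration of how such an identifier can be composed and taken apart again, consider the following sketch. The three components come from the description above. The prefix string is an assumption on our side and should be checked against Decentraland’s documentation, and the class name `LinkedWearableUrn` is purely hypothetical.

```python
from dataclasses import dataclass

# Assumed prefix: the text above only names the three trailing components.
ASSUMED_PREFIX = "urn:decentraland:matic:collections-thirdparty"


@dataclass(frozen=True)
class LinkedWearableUrn:
    third_party: str    # third-party name
    collection_id: str  # recommended: the third-party NFT contract address
    item_id: str        # recommended: the token id in that contract

    def __str__(self) -> str:
        parts = [ASSUMED_PREFIX, self.third_party, self.collection_id, self.item_id]
        return ":".join(parts)

    @classmethod
    def parse(cls, urn: str) -> "LinkedWearableUrn":
        prefix = ASSUMED_PREFIX + ":"
        if not urn.startswith(prefix):
            raise ValueError(f"not a linked wearable URN: {urn!r}")
        parts = urn[len(prefix):].split(":")
        # Exactly three non-empty components are expected after the prefix.
        if len(parts) != 3 or not all(parts):
            raise ValueError(f"expected name, collection id and item id: {urn!r}")
        return cls(*parts)


# Made-up values for illustration only.
urn = LinkedWearableUrn("example-studio", "0x" + "ab" * 20, "42")
text = str(urn)
print(text)
```

Using the contract address and token id as the collection and item ids keeps the mapping from an external NFT to its Decentraland representation mechanical, which is presumably why they are the recommended choice.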

For authentication of ownership, the collection owner has to provide an API endpoint that the Decentraland services will query to retrieve a list of assets associated with a given address and to validate whether a Decentraland asset is owned by a specific member. The implementation of these endpoints can vary; for example, the data source could be TheGraph or a pre-populated database. This design allows wearable representations in Decentraland for any third-party NFT on any chain. It works because the collection owner becomes the central oracle for whether an NFT is owned by a specific account on a foreign chain. But this simple and flexible solution comes at the cost of new problems. First, API availability has to be guaranteed by the collection owner. Second, as an owner of an NFT, you need to trust the API provider to properly reflect on-chain data. This iterative approach to decentralization favors speed and ease of integration over completely trustless systems that take longer to build and integrate. Linked Wearables as a feature is just a couple of months old, and apart from single NFT studios or collections, we have not seen much traction yet.
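The two queries described above, listing the assets of an address and validating a single asset, can be sketched with the pre-populated database variant. This is a minimal in-memory illustration under our own assumptions: the class `InMemoryResolver`, its method names, and the sample identifiers are hypothetical, and a real resolver would expose the same logic over HTTP in whatever shape Decentraland’s services expect.

```python
from typing import Dict, List, Set


class InMemoryResolver:
    """Hypothetical resolver backed by a pre-populated ownership table."""

    def __init__(self, holdings: Dict[str, Set[str]]) -> None:
        # Addresses are compared case-insensitively, so store them lowercased.
        self._holdings = {
            address.lower(): set(assets) for address, assets in holdings.items()
        }

    def list_assets(self, address: str) -> List[str]:
        """Return all asset ids associated with the given address."""
        return sorted(self._holdings.get(address.lower(), set()))

    def owns(self, address: str, asset_id: str) -> bool:
        """Validate whether the given address owns a specific asset."""
        return asset_id in self._holdings.get(address.lower(), set())


# Made-up owner address and asset ids for illustration only.
owner = "0x" + "AB" * 20
resolver = InMemoryResolver({owner: {"example-studio:dress:7", "example-studio:hat:1"}})

print(resolver.list_assets(owner.lower()))
print(resolver.owns(owner, "example-studio:hat:1"))
print(resolver.owns("0x" + "00" * 20, "example-studio:hat:1"))
```

The sketch also makes the trust assumption visible: whatever the table says is what Decentraland will believe, regardless of the state of the foreign chain.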

Converging on standards

As elaborated in this article, interoperability between metaverses is still in its infancy. But important bridges such as Linked Wearables are a first step towards it. We follow the discussions taking place in forums like the Open Metaverse Interoperability Group (OMI), the W3C Metaverse Interoperability Group, the Metaverse Standards Forum, and the NFT Standards working group.

Another standard is being formed by a well-funded joint venture between Yuga Labs and Improbable. Yuga Labs (the company behind Bored Ape Yacht Club, BAYC) and Improbable (which raised $150m to build the tech for massively scaling interoperable metaverses) collaborate on the metaverse Otherside and propose the Open Object Standard for in-metaverse items within Otherside and other metaverses that adopt it. It consists of a metadata ontology, a common standard for 2D images and 3D objects, and a universal scripting system currently based on JavaScript. The metadata ontology makes it possible to enable common gameplay behavior, such as tagging an object as “something to sit on” (semantic interoperability), so that the metaverse enables this functionality.

If you’re building a protocol or company that falls into the category of interoperability or digital fashion, or even both, please reach out to the deal team, we would be delighted to have a chat.